This week I attended the Market Research Society’s event with the International Journal of Market Research (IJMR): The Alchemy of Insight: Technology, Hype, and the Search for Truth. The discussion focussed on a key question for researchers and research buyers over the last 18 months: Does the rise in usage of AI across industries mean researchers need to understand the fundamental process of research less, differently, or more?

The consensus from Professor Dan Nunan, Dr Arry Tanusondjaja, and Leo Paas was clear: AI is not the silver bullet that solves every research challenge, nor will it successfully replace human researchers, because whilst technology evolves at breakneck speed, human behaviour does not. Additionally, the promise of AI as the breakthrough for reliable data may instead end in the beigification of the insight and analytics world, as the technology is being trained on cleansed, synthetic data that doesn’t reflect the messy real world.

But that doesn’t mean that, as researchers, we can ignore this disruptive technology and keep to traditional techniques for research and analysis. To stay relevant in the new landscape, researchers need to “up their game” and move to a multi-discipline world where we work with AI to take advantage of its power, whilst using our human intelligence and experience to distinguish between robust insight and plausible hallucinations.

Distinguishing insight from a plausible hallucination

Professor Dan Nunan, Editor-in-Chief of IJMR, opened the session with a grounding reminder: predicting the impact of technology is a historically flawed endeavour. Throughout the 80-year history of the MRS, the research industry has faced crisis after crisis over how to rigorously gather data and create meaningful insights.

Currently, the industry is grappling with “plausible” analysis. AI can generate outputs that look and feel like real research, so they can easily be perceived and accepted as real research. This is a particular risk when the person prompting the AI is not a research practitioner or a subject matter expert: to the uninitiated, the resulting documents look like robust insight based on facts. But as AI lacks a Theory of Mind, it cannot distinguish between a genuinely insightful discovery and a “hallucination”, and so will present both with equal weight and confidence. Therefore, a human expert must review and validate these insights before they are used to make meaningful decisions.

Nunan’s critique of synthetic data was particularly striking and aligned with my colleague François’ recent article “Synthetic data, confidence, and the risk of false certainty”. While synthetic data offers speed, it lacks the “messiness” of the real world. In synthetic samples, subgroup relationships often collapse, and regression coefficients can even flip. If we rely on these tools without a deep understanding of mixed methods (knowing when to observe and when to ask), we risk making multi-million-pound decisions based on “beige” data that lacks the nuance and complexity of reality.

The potential decline of competitive advantage and uniqueness

Dr Arry Tanusondjaja, Senior Marketing Scientist at the Ehrenberg-Bass Institute, pivoted the conversation toward the foundational “laws of growth” established by Byron Sharp and Andrew Ehrenberg. Whether it’s 1996 or 2026, empirical laws such as the Double Jeopardy Law and the Law of Buying Frequency remain constant. For example, small brands are still punished twice (they have fewer buyers, and those buyers are slightly less loyal), and growth still comes from light buyers, not just loyal consumers.

Organisations want to get ahead of the competition, and the new Generative AI tools may give the illusion of providing a competitive advantage to a brand. However, the danger of Generative AI, as Tanusondjaja noted, is the “beigification” of insights. If every brand uses the same LLMs for content and research, any unique competitive advantage disappears into a sea of averages.

Therefore, for a brand to really stand out today and use research and analysis to its advantage, it must spot the outliers that AI will normalise and ignore. That way, we can ensure that we are not merely analysts processing data, but researchers uncovering the “significant differences”: that 1% of data where the next market disruption that drives growth actually lives.

Maturity, the subject matter expert, and multi-disciplinary research teams

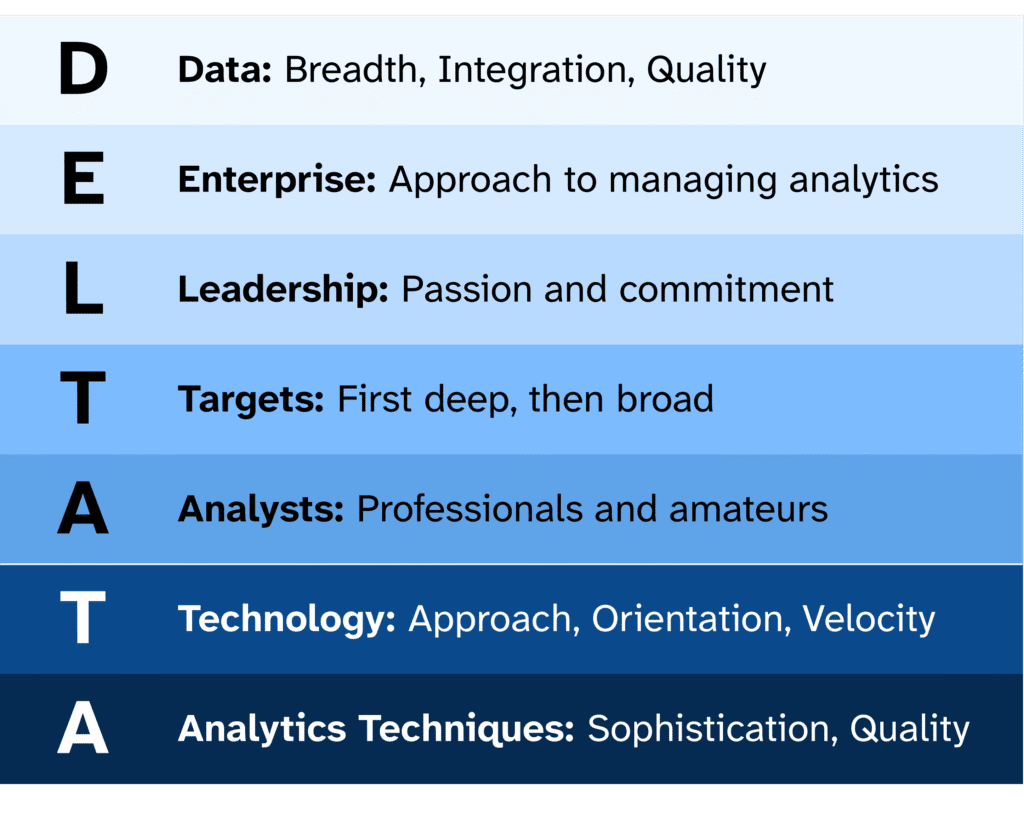

Finally, Leo Paas, Professor of Marketing at the University of Auckland, addressed the structural reality of organisations’ research and analysis maturity today. As researchers, we may be panicking that organisations can in-house all our skills and experience using AI Agents. But the reality is that many companies are still struggling with basic data hygiene. Using the Delta Plus Model, Paas highlighted that AI could enhance every stage of analytical maturity, but only if a human understands the domain.

This is because, whilst an algorithm might maximise a long-term goal, it cannot judge whether a tool is fundamentally wrong or whether a prompt contains leading questions. Again, it can’t distinguish between a real, fact-based answer and a plausible hallucination.

Therefore, for organisations to take advantage of the speed and computational power of AI with minimal risk, a human expert must work alongside the AI. This is vital from both a business and an ethical point of view. To avoid unintended consequences and keep our work inclusive, we must validate automated decisions with human experience, so that we aren’t forgetting minority groups or niche behaviours that the AI, trained on the majority and asked to predict the norm, might ignore.

Finally, Professor Paas reminded us that AI isn’t coming for our jobs. It’s just a tool that can enhance our work if used correctly. Societally, we have given AI a level of agency that it should not have. It is not an actor in the system. We are the actors, and it is our responsibility to use and train the tooling both ethically and effectively.

My Verdict: Researchers will need to understand more, and work differently

I must acknowledge my confirmation bias with the speakers. I agree that Gen AI is a tool which is potentially making our world a lot beiger and blander. And I believe we are entering a phase where the researcher must be more rigorous than ever and research teams must be multi-disciplinary to provide a competitive advantage. But I also agree that Gen AI is a powerful tool that, if harnessed correctly, can help us solve complex problems faster.

Perhaps it comes from working in cross-functional, user-centred teams for over 16 years, but I believe that people with different skills and experiences produce better results when they work together on the same challenge. Research is no different. In my mind, the difference, and the huge opportunity for the future, is adding specialist AI Agents to cross-functional teams to do the heavy lifting.

So, my answer to the original question: as researchers, we will need to understand the research process more, both to deploy more sophisticated mixed-method approaches and because we are the only ones capable of catching the bias, the hallucinations, and the “plausible lies” of automation. If researchers embrace this new world and work together differently, with analysts, technologists and AI agents, we will be able to do more than ever before to empower humans and automated systems to make better decisions based on facts, not hallucinations.

To conclude, I think the future of research looks bright and exciting. Today, AI is a powerful tool for efficiency but a high-risk approach if left unmanaged. The future of research is not about choosing between traditional techniques and AI; it’s about combining Human Intelligence and Artificial Intelligence in a rigorous, multi-disciplinary framework.